ouch, bandwidth costs

As much as I love Amazon S3, it's not quite as cost-effective as I'd like it to be.

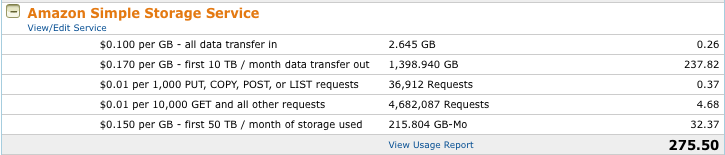

For example, this number has been creeping upwards lately (much to my concern):

Note that these are just my storage costs - they don't factor in my server costs. Given that Tabulas is a free project (for which I should really start collecting some money again shortly), paying close to $700/month isn't ideal. To that end, I've decommissioned one of the extra servers and am trying to figure out a way to rein in the storage costs.

So today, I took a first pass at this by writing a short PHP wrapper script around images - you'll notice images are being served from images.tabulas.co - this is running locally on this machine. What it does is copy the image from S3 and then serve it from the primary server. I'm sending along Last-Modified and ETag headers, so I can quickly send out 304s when the client's copy is still current. This should begin to limit the number of times clients hit S3 directly.
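A minimal sketch of the conditional-request logic described above, written in Python rather than the PHP the wrapper actually uses. The function names, the way the ETag is derived (a hash of size and modification time), and the plain-dict headers are all assumptions for illustration, not the real script.

```python
import hashlib
from email.utils import formatdate, parsedate_to_datetime


def make_validators(size: int, mtime: float) -> dict:
    """Build Last-Modified and ETag headers for a locally cached copy.

    Deriving the ETag from size + mtime is an assumption; any stable
    per-version identifier would do.
    """
    digest = hashlib.md5(f"{size}-{int(mtime)}".encode()).hexdigest()
    return {
        "Last-Modified": formatdate(mtime, usegmt=True),
        "ETag": f'"{digest}"',
    }


def respond(request_headers: dict, size: int, mtime: float):
    """Return (status, headers): 304 if the client's copy is current, else 200."""
    validators = make_validators(size, mtime)
    if_none_match = request_headers.get("If-None-Match")
    if_modified_since = request_headers.get("If-Modified-Since")

    if if_none_match is not None:
        # If-None-Match takes precedence over If-Modified-Since.
        # Simplified: exact match only, no weak (W/) ETag handling.
        candidates = [tag.strip() for tag in if_none_match.split(",")]
        not_modified = "*" in candidates or validators["ETag"] in candidates
    elif if_modified_since is not None:
        try:
            since = parsedate_to_datetime(if_modified_since).timestamp()
            not_modified = int(mtime) <= since
        except (TypeError, ValueError):
            # Unparseable date: fall back to sending the full response.
            not_modified = False
    else:
        not_modified = False

    return (304 if not_modified else 200), validators
```

A 304 carries no body, so a repeat visitor whose cached copy is still valid costs only a few hundred bytes of headers instead of a full image transfer.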

One thing I still need to do is write an update script to retrieve metadata for all files - a lot of the older images are missing key pieces of information (Content-Type and Content-Length); those are still being 303-ed back to S3.
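The fallback for older images could be sketched roughly like this, again in Python as a stand-in for the PHP script. The metadata dict, the `route` function, and the example bucket URL are hypothetical; only the rule itself (missing Content-Type or Content-Length means a 303 back to S3) comes from the description above.

```python
REQUIRED_KEYS = ("Content-Type", "Content-Length")


def missing_metadata(meta: dict) -> list:
    """List the required metadata keys that are absent or empty."""
    return [k for k in REQUIRED_KEYS if meta.get(k) in (None, "")]


def route(meta: dict, s3_url: str):
    """Serve locally when metadata is complete, otherwise redirect to S3."""
    if missing_metadata(meta):
        # Incomplete record: send the client to the original S3 object.
        return 303, {"Location": s3_url}
    return 200, {k: str(meta[k]) for k in REQUIRED_KEYS}


# Hypothetical records: one complete, one older image lacking metadata.
complete = {"Content-Type": "image/jpeg", "Content-Length": 48213}
legacy = {"Content-Type": None}
url = "https://example-bucket.s3.amazonaws.com/photo.jpg"  # placeholder URL

print(route(complete, url)[0])  # 200
print(route(legacy, url)[0])    # 303
```

The same `missing_metadata` check could drive the update script: any record it flags is one whose headers still need to be fetched from S3 and stored.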

Looking at the more detailed usage statistics, images account for about 6 GB of traffic per day - it's *files* which come in at roughly 10x that amount (ouch!). So I guess the next step will be to apply this concept to files.
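To put those daily figures in monthly terms, here is a quick back-of-the-envelope calculation. The 30-day month is an assumption, and "roughly 10x" is treated as exactly 10 for the sake of arithmetic; no pricing is applied since the per-GB rate isn't stated here.

```python
IMAGES_GB_PER_DAY = 6    # from the usage statistics above
FILES_MULTIPLIER = 10    # "roughly 10x", treated as exact here
DAYS_PER_MONTH = 30      # assumption


def monthly_gb(daily_gb: float, days: int = DAYS_PER_MONTH) -> float:
    """Scale a daily transfer figure to a month."""
    return daily_gb * days


images_month = monthly_gb(IMAGES_GB_PER_DAY)
files_month = monthly_gb(IMAGES_GB_PER_DAY * FILES_MULTIPLIER)

print(images_month)                # 180
print(files_month)                 # 1800
print(images_month + files_month)  # 1980
```

So images come to about 180 GB a month while files are closer to 1.8 TB, which is why files are the bigger target.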

One of the side effects of these changes is that it'll allow me to actually set permissions on the files themselves - currently, like with many other consumer sites, friends-only statuses do not apply to the images themselves, so if you have an image's URL, you can just pass it along directly and anyone can view it. This'll help towards fixing that.
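Once requests pass through the wrapper, a permission check becomes possible before any bytes are sent. A rough sketch in Python, assuming a hypothetical `visibility` flag on each image and a set of friend usernames per owner; none of these names come from the actual Tabulas code.

```python
from typing import Optional, Set


def can_view(viewer: Optional[str], owner: str, visibility: str,
             friends_of_owner: Set[str]) -> bool:
    """Decide whether `viewer` (None for a logged-out guest) may see an image."""
    if visibility == "public":
        return True
    if viewer is None:
        # Guests never see restricted images, even with a direct URL.
        return False
    if viewer == owner:
        return True
    if visibility == "friends":
        return viewer in friends_of_owner
    # Unknown or private visibility: owner only.
    return False


friends = {"alice", "bob"}  # hypothetical friend list for user "roy"
print(can_view(None, "roy", "friends", friends))     # False
print(can_view("alice", "roy", "friends", friends))  # True
print(can_view("carol", "roy", "friends", friends))  # False
```

A wrapper would return a 403 instead of the image when this check fails, which is something a direct S3 URL can't do on its own.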
